I field messages and requests all week long from parents who want the latest tools for keeping their kids safe online. They ask about everything from YouTube to Instagram to Snapchat and want apps that will monitor social media use, block adult content, and limit screen time. While there are resources to help parents in each of those departments, some apps just aren’t intended for your younger child. Unfortunately, many parents have a hard time accepting that fact.

Streaming Videos

Let’s look at YouTube as our first example. The video app was created in 2005 as a place for anyone to upload short videos to share with their friends. Google purchased YouTube in 2006 and Social Media became popular soon after, rocketing YouTube to the successful streaming platform it has become. The site is loaded with videos from filmmakers, vloggers, video gamers, makeup artists, geeks, professionals, educators, ministers, animators, artists, and basically any other category you can think of. It has evolved into an immovable force to which 300 hours of footage are uploaded every single minute. YouTube has come under fire for some of its content being too mature or sensitive, and so it has employed algorithms to keep tabs on inappropriate videos. It also released an app for children called YouTube Kids. That app has seen its own share of controversy, because YouTube has been unable to keep sensitive material from showing up in videos there.

YouTube obviously wasn’t intended for young viewers. It is a site that is populated primarily by videos uploaded by its users. Some companies that make content for kids use YouTube, but that is a choice those companies make in response to the popularity of the platform. It’s an attitude that says: “Kids are there, so we should be there too.” The goal is to reach the audience already there, not necessarily to build an audience on YouTube. There are no real parental controls (safe search is mostly useless), and videos labeled as kid friendly get that label without any human eyes ever seeing the entire video. The only time a content reviewer sees a video is when enough users of the site have flagged it as inappropriate. Allowing your kids to watch YouTube on their own is a risk that many parents don’t even realize they are taking.

What about Social Media?

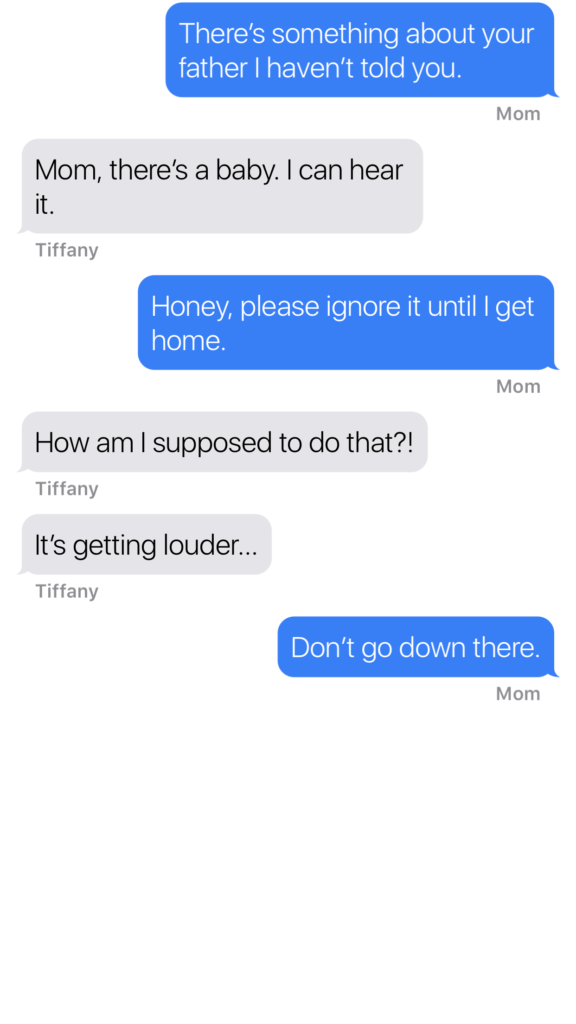

Snapchat, Instagram, and Facebook are all the same in this respect. They, like YouTube, feature content created and posted by the users of the service. This “User Generated Content” can range from political or religious views, to silly cat videos or memes, to random personal updates that mean nothing to anyone. People also post updates on their serious mental health issues, they share their plans to harm themselves or others, and they post images of themselves in compromising situations. And that’s just what people post publicly. Private messaging contains content that people post when they think nobody except those they trust is watching. Private messaging is how predators groom their victims. It’s how the out-of-control teenage boy convinces the girl to send him inappropriate pictures of herself. Social Media is intended to be a place to connect with people, some you know and some you don’t. It is meant to be a public forum, and whatever is meant to be private is meant to be completely private. This is where the problems come in when parents ask for ways to monitor their kids’ social media.

Age Rating vs Terms and Agreements

I see a lot of parents giving their kids access to social media and other online activities when they reach the age of 13. This is based on the fact that the terms and agreements these sites have you approve before making an account list 13 as the minimum age to use their service. A common mistake parents make is thinking that this age is meant to protect their kids from content on the site when, in fact, it’s intended to protect the company from holding data and information on kids under the age of 13. COPPA says that companies can’t collect and use information from kids under 13 without parental consent. If a company says you can’t use the site if you’re under 13, then it can do whatever it wants with all of that data, and if your kid is underage, it isn’t the company’s fault. You ignored the Terms and Agreements when you allowed them to use the site.

Age rating is the age recommendation you’ll see in the app store when you are downloading an app. This age restriction is based on the actual content in the app, not on any legal requirements for the company. The usual standard is that apps populated by user generated content are rated 17+. This is because the company can’t guarantee that what is seen on its product won’t be considered adult content. When we allow our kids to use apps that contain user generated content, we are subjecting them to the opinions, behavior, and whims of everyone else who uses that app.

Parental Involvement Before Parental Control

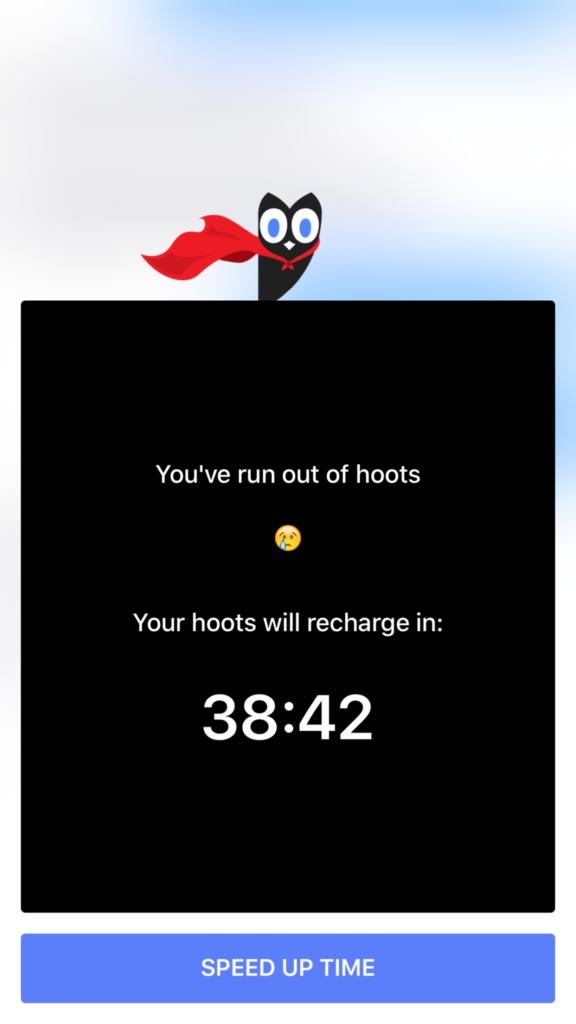

When I am asked to help parents protect their kids in apps that are obviously not made for children, I feel like I’m being asked to give parents a suit their kids can wear to protect them while they play in a burning building. I get it. It isn’t easy to tell your kids they can’t do something they want to do. You hear “My friends are all on Snapchat,” or the one that irritates me to no end, “The teacher/coach says I have to use Facebook to get the homework/practice schedule.” Sometimes we just have to say no. It is difficult to set the boundaries and limits that keep our kids safe, but if we have the right attitude about what we’re protecting them from, it becomes easier. Social Media, YouTube, video games that are rated M for mature: none of these things are intended for people under the age of 17, and when we allow our kids to use these products, we open them up to a world that is meant for adults.

It is difficult for algorithms to catch nudity or violence in uploaded videos. Social Media sites and private messaging apps go to great lengths to keep prying eyes from seeing what is being sent. This makes parental monitoring software hard to develop. Unfortunately, some burning buildings are just too dangerous, and there isn’t much that can be done to protect you if you’re inside. If you aren’t OK with your child seeing content that is meant for grown-ups, then I recommend thinking about uninstalling that app instead of trying to find software that doesn’t allow it to do what it was intended to do.

The courts will decide whether or not Apple is guilty of unfair business acts, but as parents, we have to look closely at the question of responsibility with tech. Yes, there is a level of concern that is acceptable for a company to consider when it is producing a product. However, the first layer of responsibility should lie with parents. No, your kids shouldn’t text and drive, and they are hearing that from all over. The question is, “Are they hearing it from you?” Are they seeing something different from you? If you are texting and driving while your kids are hearing the message that it’s wrong and dangerous, then you are removing a layer of education that can be critical to your child or teen’s safety. Our example is very important.
