The Story So Far

It is a long and arduous story, the tale of Apple shutting down parental control apps. Some say it was done to protect Apple’s investment in their own Screen Time app while others believe Apple truly has the wellbeing of their customers at heart. It is hard to look at this story from any one angle alone without making a blanket statement about the opposing side. This is why I have taken a look at all sides and wish to help you, parents, understand what is happening in this strange new war.

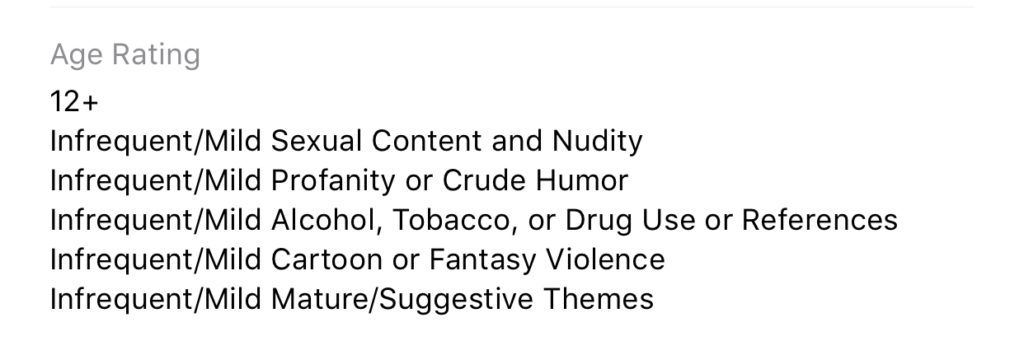

Last fall, after announcing the release of iOS 12, which features their new controls app “Screen Time,” Apple began to deny certain parental control apps access to the App Store, apparently citing the fact that Apple doesn’t allow apps to use any method to block other apps (a pretty important feature in parental control software). Developers of eleven of the top seventeen parental control apps, including Mobicip, OurPact (the top parental control app in the App Store), and Qustodio, were in communication with Apple for months about their apps being removed and what it would take to get reinstated. Apple’s comments seem to have centered mostly on the removal of apps and the use of something called MDM, or Mobile Device Management. Apple’s position is that MDM allows access to information that should remain private. Developers of the parental control apps say that Apple said nothing about privacy in any of their communication about getting the apps reinstated. This is causing a bit of concern for developers, media, and parents alike.

There is even more information about MDM in the video and podcast.

Recently, the New York Times released an article about Apple’s removal of the parental control apps from the App Store, alluding to the possibility that the move was meant to eliminate competition for Apple’s Screen Time or even to keep people from using apps that cause them to use their iPhones less often. We are obviously getting a lot of they said/they said back and forth with this story, and there is more to come (lawsuits and such), but here is what I think it all means for parents.

What Parents Should Know

Above all, it is important for parents to understand that there is no such thing as the perfect parental control app. The free ones are likely selling your data, and the paid apps are usually using some sort of loophole to even work properly. Apple takes a pretty closed approach to their App Store, only allowing a very small “sandbox” for developers to work in. This causes many of the parental control apps in question to fall short of complete and total control. MDM allowed for a bit more of that control, but without that access, many of these apps are simply useless. I do believe that parental control apps should be held responsible for what they do with the data that they collect. Apple takes data security and privacy very seriously, and this is what they have said is at the core of their stance against some of these apps. Apple must protect the privacy of their users. It is a major part of their platform and what sets them apart from their competitors.

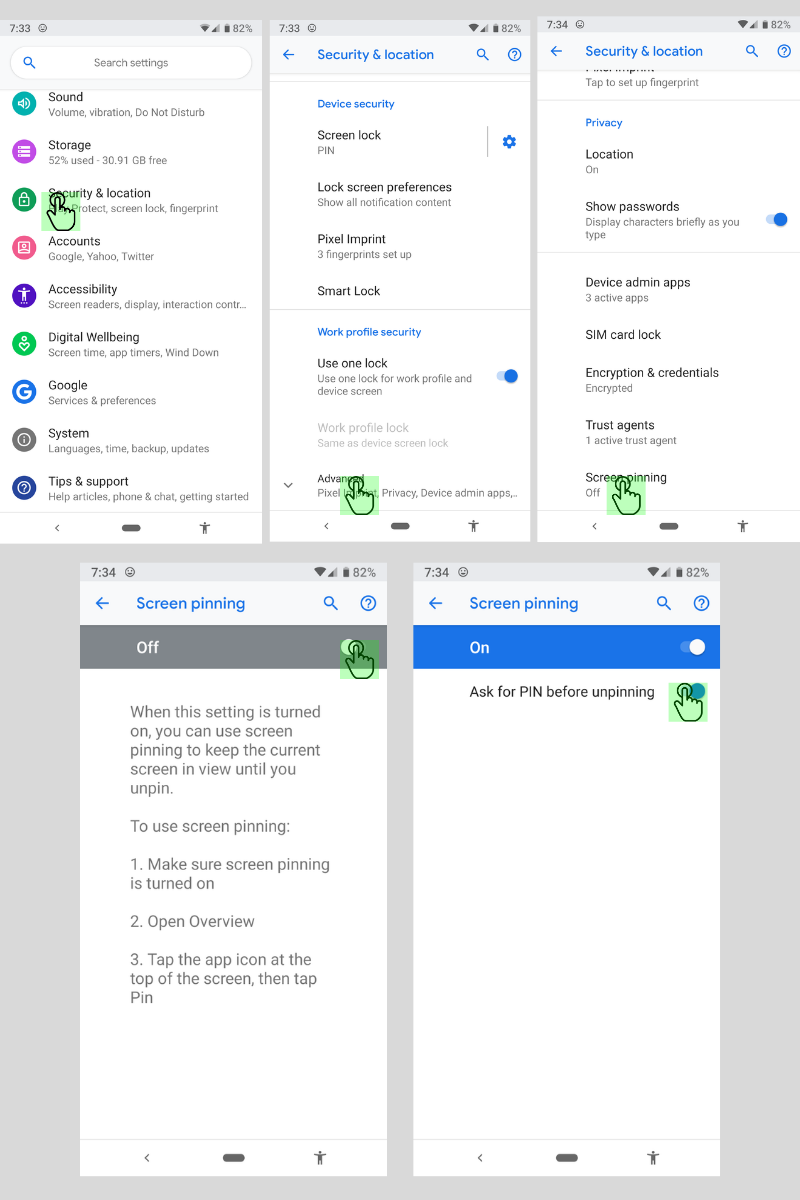

What does this mean for us as parents who want to protect our kids? First of all, we have to remain vigilant to keep our kids safe online. Use some sort of network-level parental controls. Whether you use Circle or something else that is built into your router, it is a lot easier to set up filters that cover your entire network than to set them up on each device. You can also just learn and use the built-in parental controls that Apple and Android have created. Screen Time isn’t perfect (as I said, none are), but it is pretty good. Use the resources you have, along with a good, healthy environment of conversation and security, to keep your kids using tech properly and discussing it with you regularly.

Until Apple makes it easier for software developers to access user behavior, the built-in parental control options will be better for iPhone and iPad users. Screen Time is currently a bit limited, but it is a lot better than nothing and will work for most families. The best part is that the stance Apple has taken on privacy will also apply to users who have set up Screen Time. Any account that you have set up for your child will be treated as a child’s account, and Apple’s terms state that their data will be treated as such also. Maybe your favorite parental control app is a part of this whole drama. If so, hang in there and set up something you can use, because this whole story isn’t over. I’ll keep you updated as more happens.

For even more, listen to the podcast episode.