I spent five days walking the showroom floor and attending conference sessions at CES2020, the largest trade show in the world. I saw all kinds of technology, from smart cars and smart homes to toys and educational products for kids. Kids are the reason I am at CES. I’m there to learn how these products can benefit our kids in the future. Tech is genuinely useful as a tool for education, entertainment, and development. Many kids are learning in ways they couldn’t before, and VR and AR classrooms are giving children opportunities they never had. Technology is, and always will be, a part of our lives, and the world is becoming more and more tech-centric. The worst thing about CES2020 seems to be that parents’ concerns about the amount of tech in their kids’ lives are being ignored.

The Worst Thing about CES2020

I heard a lot of mixed messages at CES this year, especially at the Living in Digital Times “Family Tech Summit.” It has become increasingly frustrating to listen to software developers and hardware engineers talk about how their new technology is going to change the world. Much of this technology is very neat and, as mentioned, can be helpful. But a small percentage of the people on the stages at CES are also warning us that our kids are becoming too dependent on it. Parents and teachers are growing concerned because they feel technology is moving far faster than they can keep up with. The experts at CES don’t seem to understand the anxiety caused by new, “world-changing” technology being announced every single year.

Most of the technology announced at CES is a new take on the same things we’ve had for the past ten years. I walk into the Family Tech Summit expecting to hear which new products will be best for our kids. Instead, I hear what will be best for these developers and companies: how to market their new products and close sales. I did hear from a few people about ways to protect our kids on the technology we allow them to use.

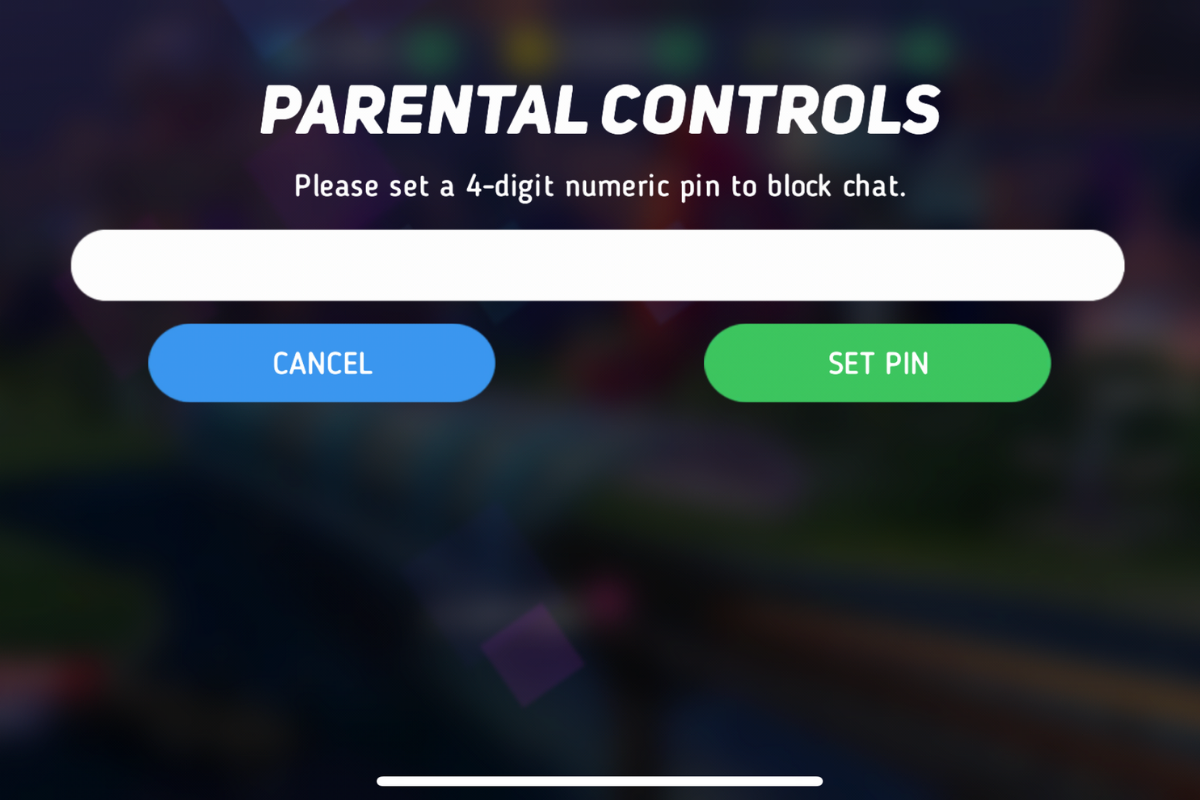

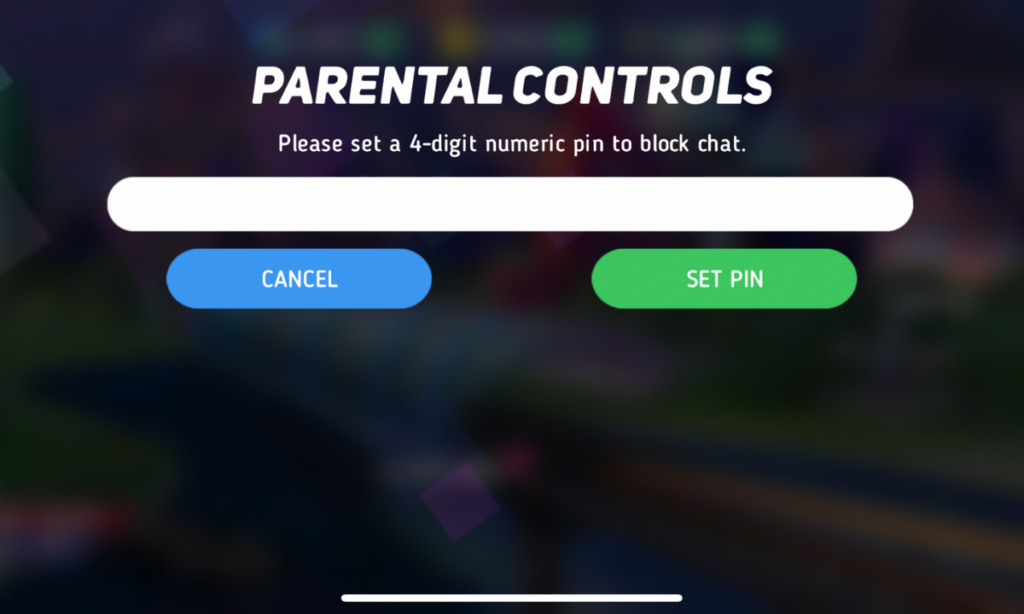

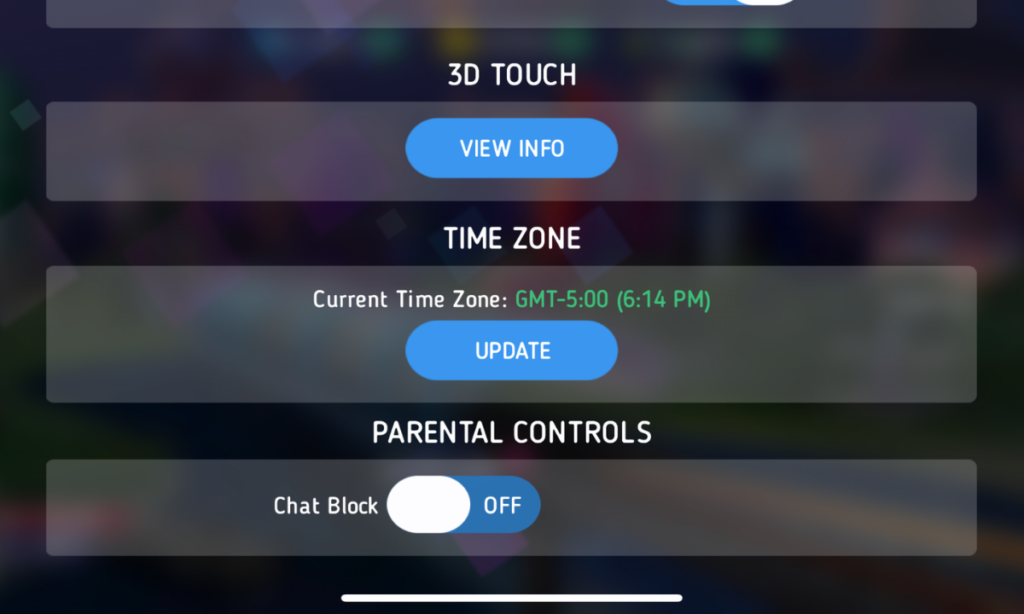

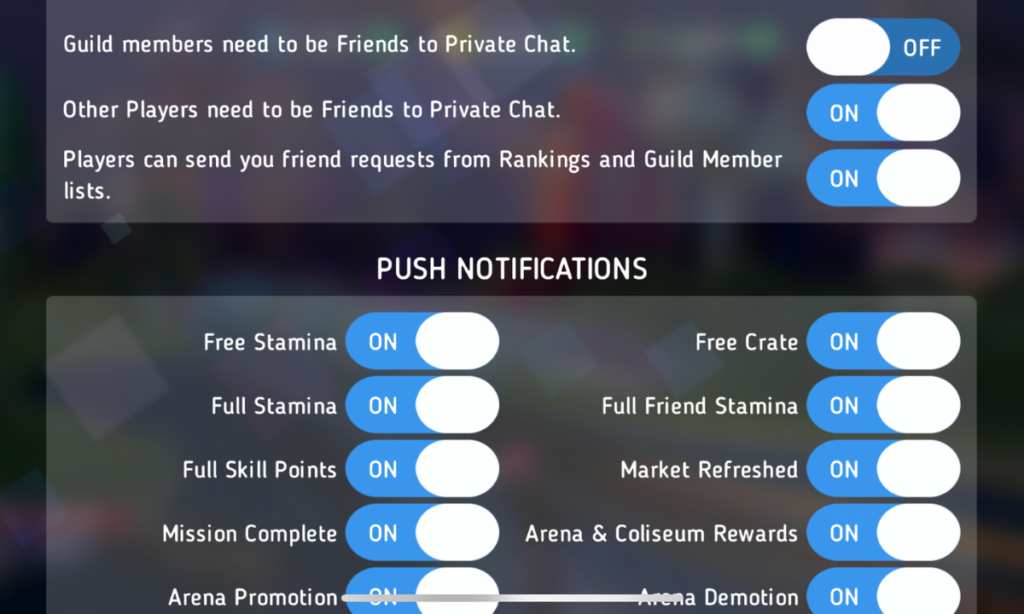

Unfortunately, they were given very little time. They were followed by someone who got on stage just to celebrate the latest voice control tech. This “expert” explained how great it is for our kids. He marginalized parents’ concerns by calling them misguided. Then he pointed out that parents seem to be concerned but don’t take action to protect their kids. He ignored the fact that companies make these products and advertise them as safe, yet build in parental controls that are weak and hard to set up. Then they wonder why they end up in the news when a kid comes across adult content on a smart speaker or is contacted by a stranger through an in-room nanny cam.

It wasn’t all bad.

There were highlights at CES2020, though. Dr. Amanda Gummer of the Good Toy Guide spoke about using tech to encourage kids to play and learn. Sean Herman, author of “Screen Captured,” talked about his own kids and how their attention to screens led him to start Kinzoo, a messenger app that “turns screen time into family time.” I met Carrol Titus, founder of GoldenPoppy Inc., who is making augmented reality games that teach physics, programming, and positive self-awareness. I enjoyed speaking with Ahren Hoffman and Sue Warfield from the American Specialty Toy Retailing Association (ASTRA). We talked about how little attention goes to giving parents the tools to learn about and use tech wisely, and about the benefits of kids playing away from screens, especially young children.

Everyone can say what they want about screen time and its benefits or risks. The truth as I see it is that technology should enhance our play and education, not replace it. Parents aren’t freaking out because their kids are spending too much time watching educational videos. They’re not concerned about their kids playing apps that teach them to read or do math. The concern is the unstoppable flow of entertainment coming at our children from toy stores and app stores. This is entertainment that has no intention of teaching anything; it just uses up your child’s time and attention to show them ads or sell them access to more entertainment. I understand that many people want to see tech become the new norm for education, recreation, entertainment, and everything else.

The issue is that we currently aren’t promoting balance. Certainly not at CES2020, and definitely not in our app stores or on our retailers’ shelves. Once again, it falls to us as parents to take steps toward a healthy attitude about tech. Digital wellness is our responsibility. The more I hear from app developers and toy makers, the more certain I am that they won’t take it seriously, not really, so we have to.

If you’re concerned about what your kids are doing online, be sure to check out Accountable2You.com. It is my favorite accountability software and will help you keep a close eye on the websites your kids visit.
