TikTok is at the center of a controversy over children’s exposure to predators and child pornographers through live streaming on its app. One in twenty children who use live-streaming apps has been asked to take off their clothes, according to a study by the UK children’s charity NSPCC. Originally called Musical.ly, TikTok claims to “empower everyone to be a creator directly from their smartphones, and is committed to building a community by encouraging users to share their passion and creative expression through their videos.” Their mission statement sounds like they are building a place for our kids to stretch their creative muscles and build a supportive audience, but in reality the app is exposing them to potential danger.

Sexual exploitation is only part of the issue. There are popular hashtags on the app that highlight self-harm and eating disorders. Tags like #thinspo (thinspiration) feature videos of children as young as eight showing their rib cages through their skin and proclaiming that they are an inspiration to others who desire to be thin. Suicide and self-harm are also featured on the app, complete with encouragement to hurt yourself and instructions on how to do so. TikTok says you have to be 13 to use the app but, as we have shared multiple times on this site, that age exists to protect the company from legal action concerning the collection of children’s data, not to protect your children from content on the app.

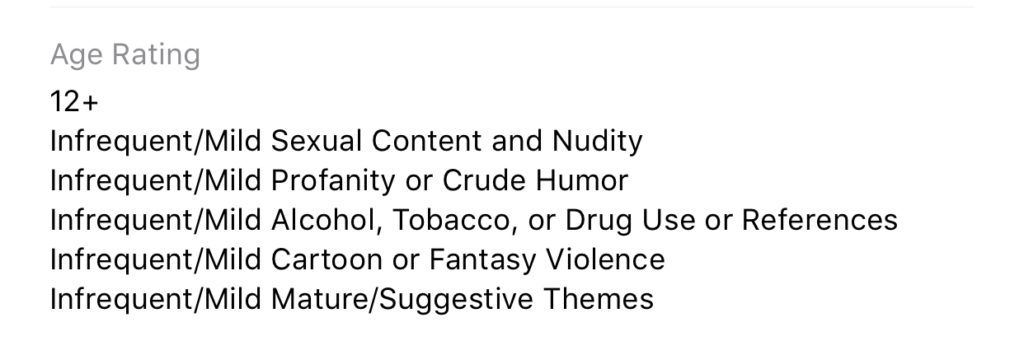

While the app is rated 12+ in app stores in the U.S., the reasons listed for the rating prove to be, in fact, very mature. The issue, again, is user-generated content. Anyone with a smartphone and a Wi-Fi connection can make videos and now livestream on TikTok, and they can also watch you perform on the app. This makes for an open, dangerous atmosphere filled with predators, adult content, scams, and violence.

What Parents Should Know

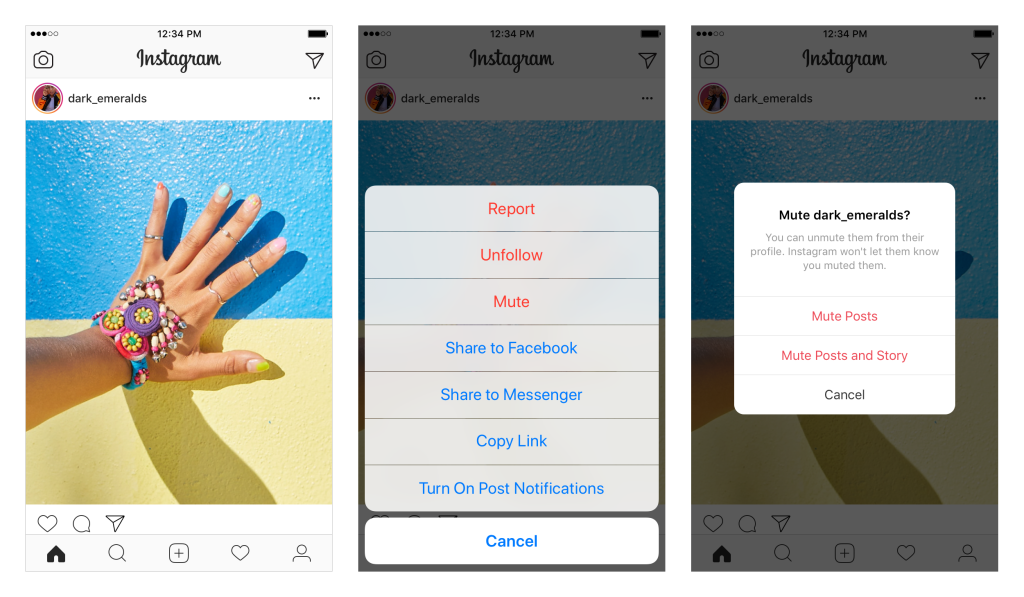

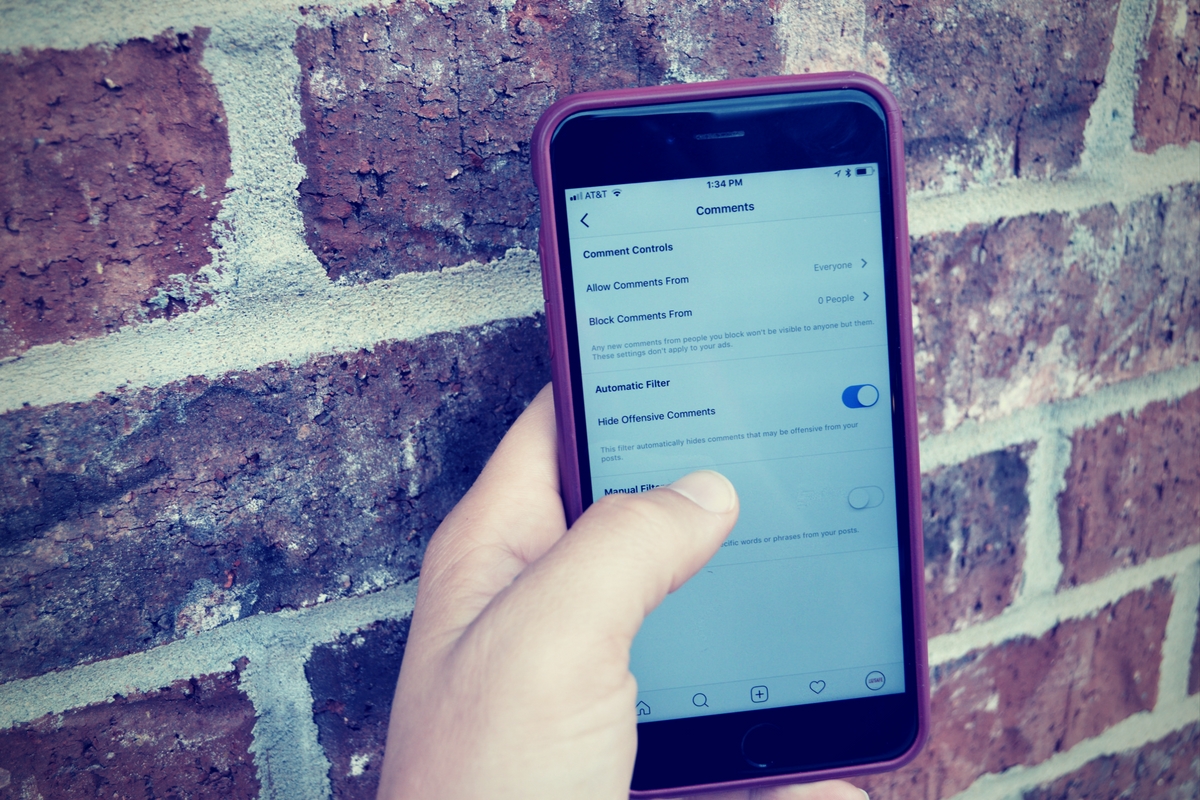

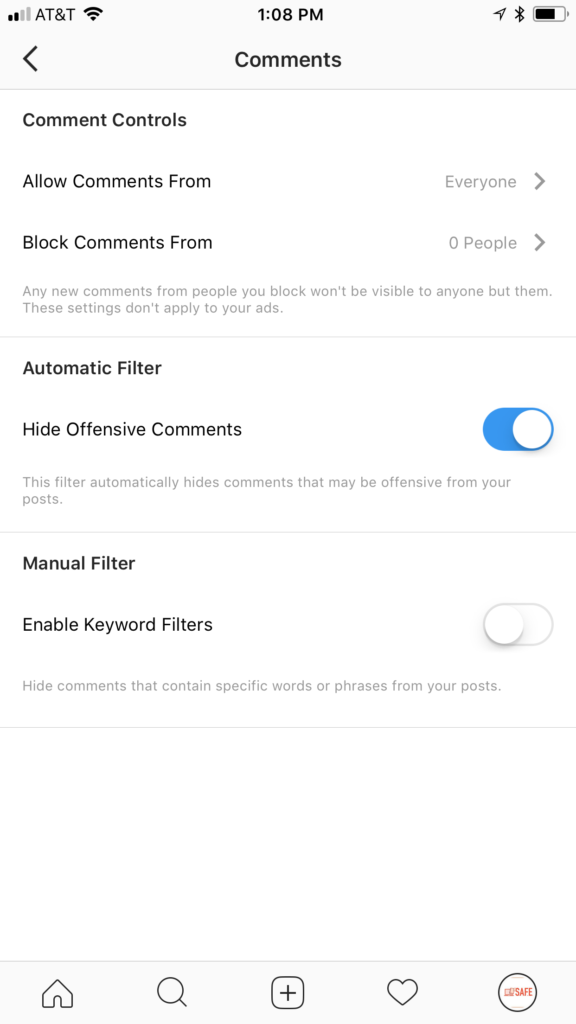

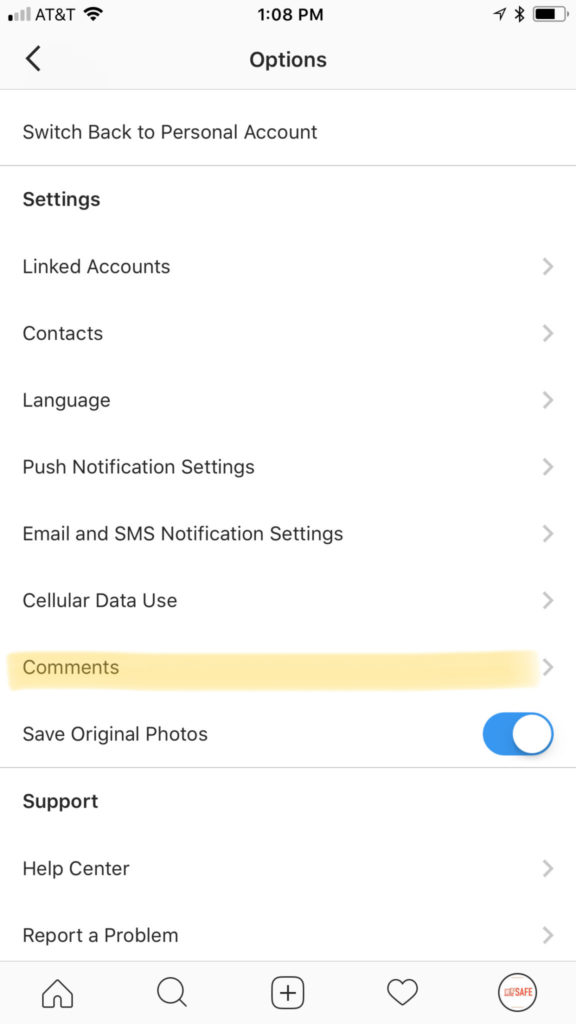

TikTok says it has filters and parental controls in the app that allow you to set the account to private, but all of these measures have proven to be less than effective. Kids who use the app on their own can easily come across content that isn’t age appropriate. The content restriction and time management settings in the app are password protected; they can be useful and should be set up if you allow your child to use TikTok. Also be sure to turn off the ability for non-friends to comment on, share, and download your child’s videos (this is on by default, creepy right?).

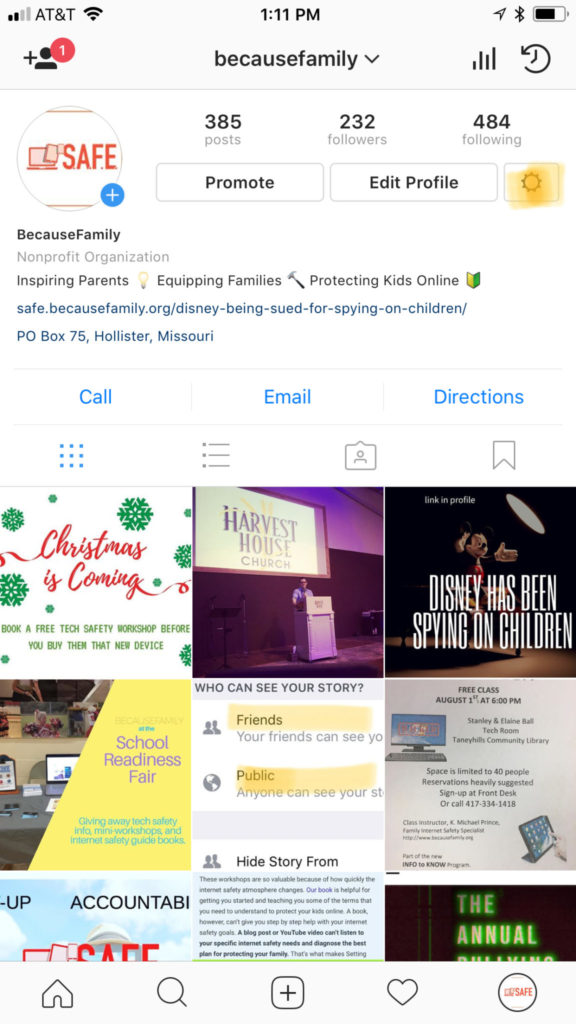

We don’t want our kids talking to strangers online. All parents understand the dangers associated with live-streaming and posting public videos to the internet. Unfortunately, many parents feel that their hands are tied when it comes to keeping their kids safe on these apps and websites. That isn’t the truth, however. There are tools (some in the app and some third party) that you can use to keep them from accessing things that are dangerous. An algorithmic filter is never going to be enough, though, so it is important that we have open communication with our kids about what they are posting and seeing on apps like TikTok. Also, if your child doesn’t meet the age restriction, then they shouldn’t use the app.

Twenty-five percent of kids talking to strangers online is a horrifyingly high statistic. It shows that while there are privacy settings and parental controls out there for parents to use, either parents aren’t using them or their kids are getting around them. I know that the privacy settings in TikTok aren’t password protected, so if your children want to talk to strangers on the app and they have time using the app by themselves, there are ways for them to make that happen. It is important that we as parents take the responsibility to protect our kids online. Many media outlets are blasting these companies for putting our kids in danger, but I have to be honest: you don’t blame the slide for your kid falling off and busting their face. You think of precautions that YOU can take to keep that from happening in the future.