This is a Parents’ Guide to Among Us

This guide is intended to inform parents and help them make quality decisions for their families. The rating reflects my own opinion, based on playing Among Us and watching others play the game.

The rating below is based on the game content. Online interactions will always increase the risk of unwanted content.

Violence – 3

Language – 4

Sexual Content – 5

Positive Message – 2

Monetization – 2

Total Score – 16 out of 25

(The higher the rating, the safer the game is for kids.)

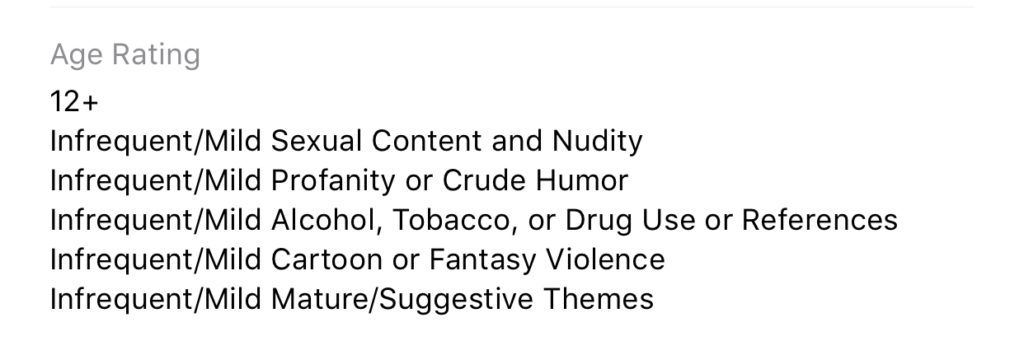

ESRB Rating – Among Us has an ESRB rating of 10+. It is rated 9+ in the app stores and Common Sense Media gives it a rating of 10+.

About the Game

Among Us is an online multiplayer game of social deduction, teamwork, and betrayal. You play as crewmates on a spaceship or space station who are trying to prepare the ship for takeoff. You have tasks that you all must complete to win the game. The catch is that there is an imposter Among Us. This (or these) imposter(s) can sabotage your efforts to prepare your ship, and they can also kill you or your crewmates. When a dead body is found, a meeting is called. The entire crew discusses what has happened and what they’ve seen that could give hints as to who the imposter is. They then all vote, and if someone gets a majority of votes, they are ejected from the ship. If that person was the last imposter, the crew wins; otherwise, it’s back to the ship to complete your tasks and hope the imposter doesn’t get to you first.

This game has a little bit of everything. There are simple puzzles, social interactions, mystery, and even some opportunity to be a little dark by killing your friends in-game. The graphics are simple and a bit silly, but the gameplay is so fun that it doesn’t matter. This is truly a social game and cannot be played on your own. There is a “freeplay” mode in which you can explore the map and get familiar with puzzles but it is really just for preparing to play online multiplayer.

Violence

One of the key themes in Among Us is murder. The imposter is trying to sabotage the ship by whatever means necessary. This usually includes killing crew members. You kill by simply tapping or clicking an icon when you’re close enough to a crewmate. There is then a short animation of your murder. Sometimes you slice them in half, sometimes your small companion (in-game purchase) will shoot them, and sometimes a spear-like tongue will come from you and pierce them in the face. While the animations are a bit graphic, they aren’t really bloody or gory, and they are very cartoonish and silly. The characters don’t look like humans; they are better described as colorful walking spacesuits, so when they are killed, there isn’t much realism.

Language

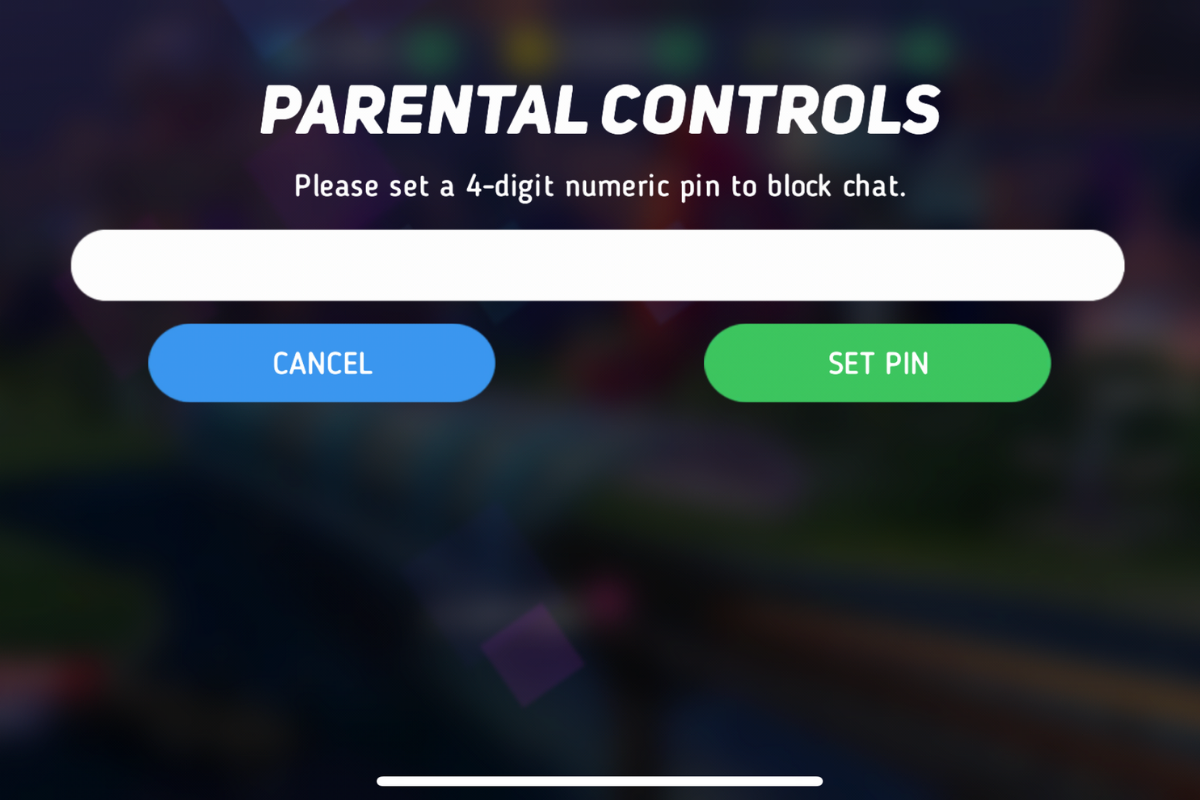

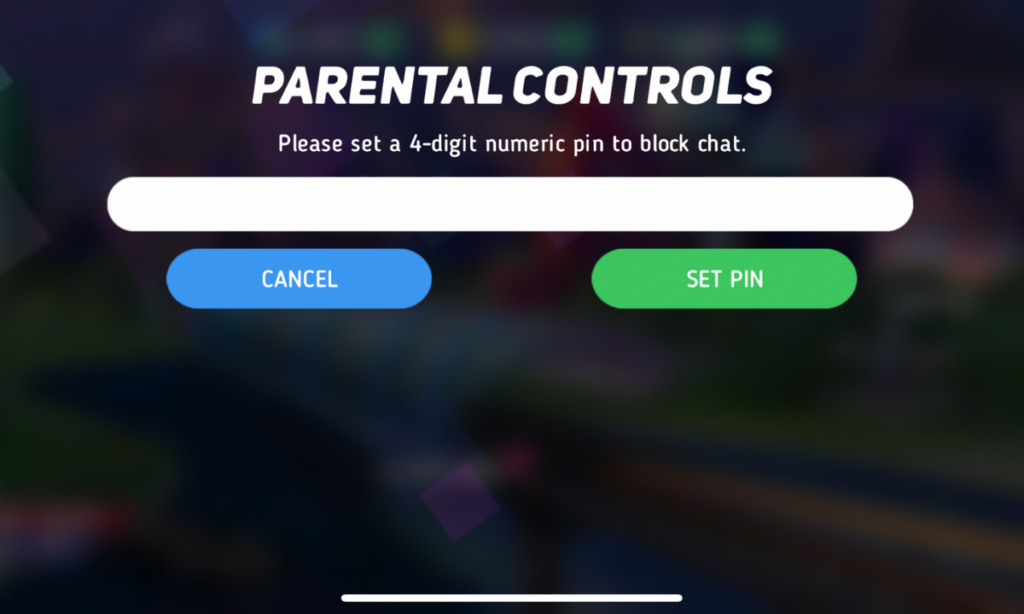

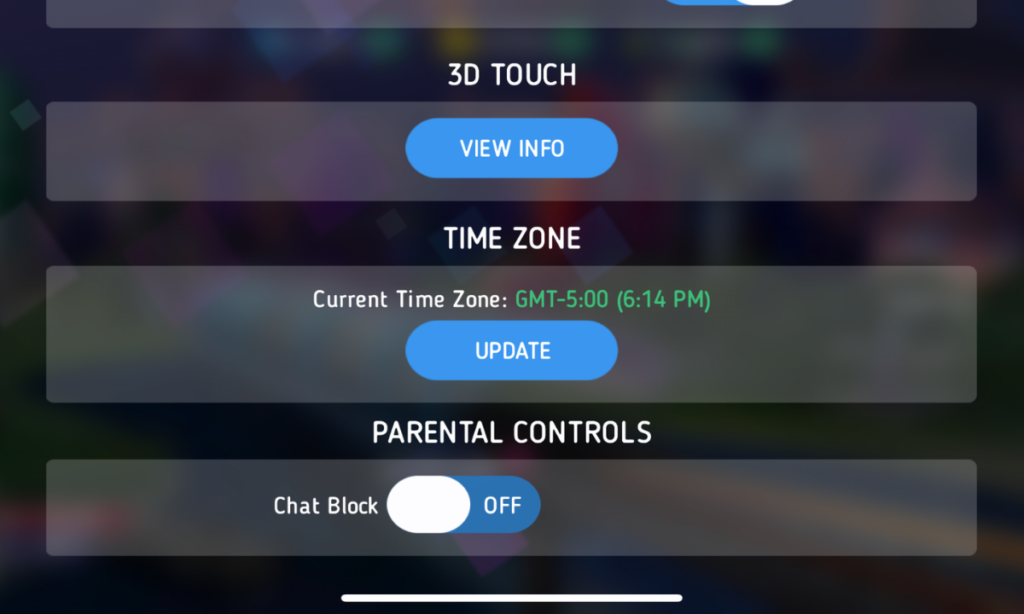

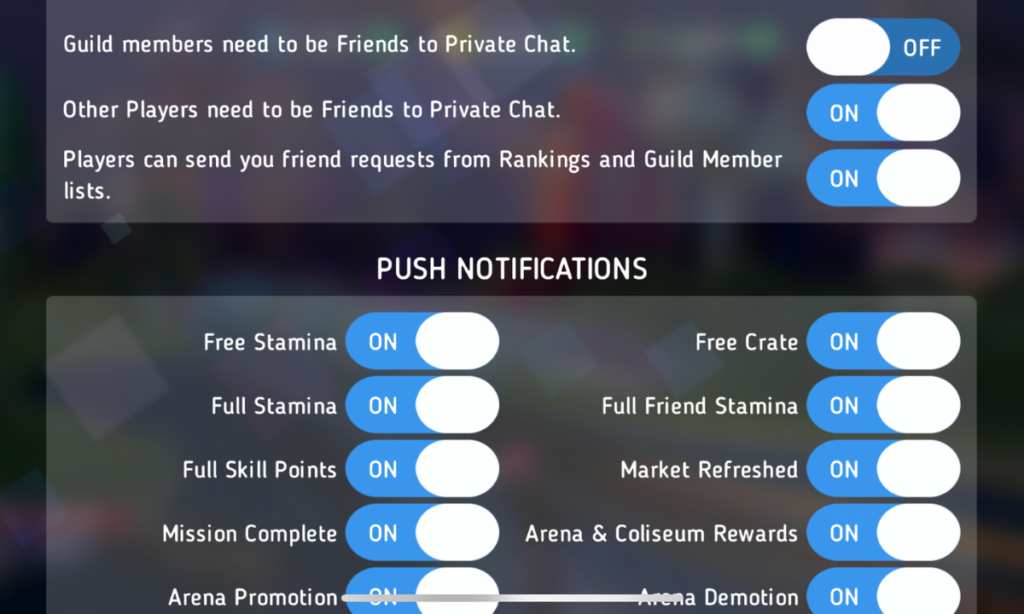

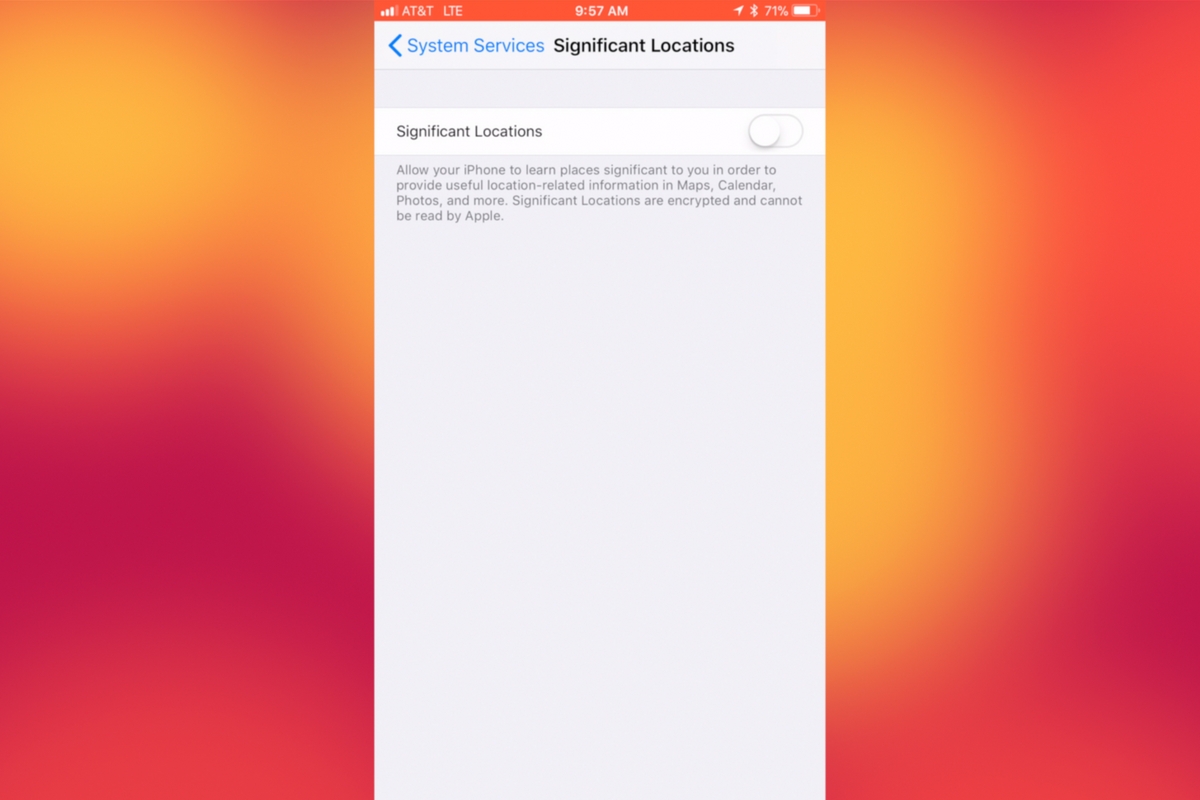

There is no dialog or narration in Among Us. This means that there is no adult language in the game itself. This is a game, however, that is meant to be played with other people over the internet. When you play a multiplayer game online, you are always opening yourself up to unsavory language. In Among Us, this happens in the chat, which is used to discuss murders and vote out crewmates. There is a censor mode that is on by default. This censor will use symbols to block out adult language and other inappropriate comments. This doesn’t mean that players don’t use these words. You’ll often see sentences with words asterisked out, and most of us can tell by the number of symbols and the context of the sentence what words were meant. It is nice that a censor is included and on by default, but it is simple to deactivate with one click/tap and is not password protected.

Sexual Content

Again, there is no sexual content in Among Us. The style of the game doesn’t lend itself to that kind of material. This is another issue, however, that is greatly impacted by online play. While the censor mentioned above will block some sexual comments, most make it through. While playing the game I saw many players with suggestive usernames. Nothing obvious but definitely innuendo. When these names were commented on in chat, however, they were mostly met with annoyance by other players who just wanted to play the game and were therefore not amused.

In other words, there will always be people who think their immature sexual jokes and comments are funny but in such a social game you’ll also find a majority of players who aren’t interested in that kind of humor. These players usually kick out or shut down the inappropriate players pretty quickly.

Positive Message

I guess we can talk about teamwork and trust here, but in reality, this game is just all about having fun. There is no real moral to Among Us; it is intended to be a clone of the classic party game Mafia but set in space. Playing with friends is easy through the local or private game settings, and this allows kids to have fun with friends even though we can’t be around each other all of the time these days. I think this is what made Among Us the breakout game of 2020 even though it had already been out for two years.

Monetization

Among Us does have in-game purchases, but they aren’t game-changing. You can buy packs of costumes, skins, and even pets. The prices are between $1 and $3 per pack, and the game is definitely playable without spending more than the $4.99 it costs on PC. The mobile version (free for Apple and Android) has ads that can be removed for $1.99. I recommend removing these ads because some of the games advertised should, in my opinion, be rated for adults only.

What Parents Should Know

Among Us is a game that I have been playing quite often lately. It is easy to pop in, play a ten- or fifteen-minute round, and then log off. I have played in public rooms with friends as well, which was quite fun as we were able to work together (trying not to cheat) to complete tasks and win. It can be a time drain, as you always want to play another round. I find myself saying “one more round” a few times before I actually quit the game. As with Fortnite or other online multiplayer games, kids aren’t going to want to drop out in the middle of a game, so giving them a warning about getting off their screen will work better than saying, “Put it away, now!” Trust me, you’ll have less conflict if you say “Be finished after this round, alright?” and then hold them to that.

The only real danger in this game is from strangers online. While that is always a concern with online multiplayer games, rounds are so short and fast-paced in Among Us that there isn’t much time for “grooming” or bullying, especially since there is no private or direct messaging. You can stay in the same “Lobby” to play with the same people, but it is so easy to back out and go into another game if you need to that I wouldn’t expect too much trouble from people in chat in Among Us.

As with most games, my recommendation is that parents understand Among Us, how it works, and what their kids like about it. Know who they are playing with online, and if they are playing with strangers, be sure they feel comfortable coming to you if they see something that makes them feel strange. This game is simple enough and quick enough that many parents should be able to play along with their kids as well. Do this. It would be really fun for you to get into their world a little bit, plus you may just enjoy the game yourself.
