I had the opportunity to speak at a conference last week that was full of educators and school administrators. They were extremely excited about what I had to share, they loved learning about ways to protect their students online, and they were generally interested in the statistics and facts about online dangers. They all, however, had one major complaint: “Parents just don’t seem to care.”

That’s right! Teachers, administrators, after-school program leaders, and even librarians want to help kids learn the best way to use their tech devices. Everyone is concerned about overuse and too much screen time. Nobody wants kids to end up on the wrong websites or to be contacted by the wrong people. They all want kids to be protected while they’re on school property, but they know that this is only a very small amount of time compared to the time kids spend online at home.

This all falls on parents. No one has as much influence over children as the parents who raise them. Teachers, coaches, pastors, and mentors all do what they can and have a real heart to protect your kids, but if mom and dad aren’t taking part, it is an uphill battle.

Here are three kinds of technology parents and where they mess up.

- The “Do as I say, not as I do.” parent.

I’ll never forget my neighbor’s grandfather when I was a child. He smoked like a chimney, several packs of cigarettes every day. When we were outside playing with our friends, without fail he would come out, light up a cigarette, and immediately tell us all, “Never start smoking, it’s really bad for you.”

I get addiction. I understand that there are things people can’t just give up. But this “do as I say, not as I do” attitude can be very harmful to our kids. When it comes to technology, most of us lift our phones about every 10 seconds on average. We spend 4 to 6 hours per day creeping on Facebook, watching YouTube, and posting to Instagram.

Even as a 10-year-old kid, I realized how weird it was that this man was standing there, chain-smoking cigarettes, and telling us not to do the same thing. Our kids get confused when we tell them they can’t have any more screen time while we are looking at our phones, just as we have all day long. Put it down, look up, and set a good example for your children.

- The “I’m super busy.” parent.

I remember being told to play outside because my mom needed a few minutes to herself. We would go play at friends’ houses, and every now and then a friend would say that his mom wouldn’t let us play there today because she needed the house to herself. Parents have always needed time without kids running around asking for things and getting on their nerves. The difference is that when I was a child, I was going to the homes of people my parents knew. Now we set our kids down in front of devices on which they can communicate with the entire world.

Using Netflix or YouTube as a babysitter is simply a bad idea. It can be useful if you know how to set it up properly, but most of the time parents know less about these sites and apps than their kids do. I get that you’re busy. I understand you have things you have to get done. It’s just very easy to allow your kid to be on the screen for 4 to 6 hours before you realize how long it has been. Use some sort of app that sets a time limit for your kids’ screen time. That way it doesn’t fall to you to remember when their time is up. It automatically kicks them off, and you can tell them to get outside and have some fun in the sun.

- The “I have great kids.” parent.

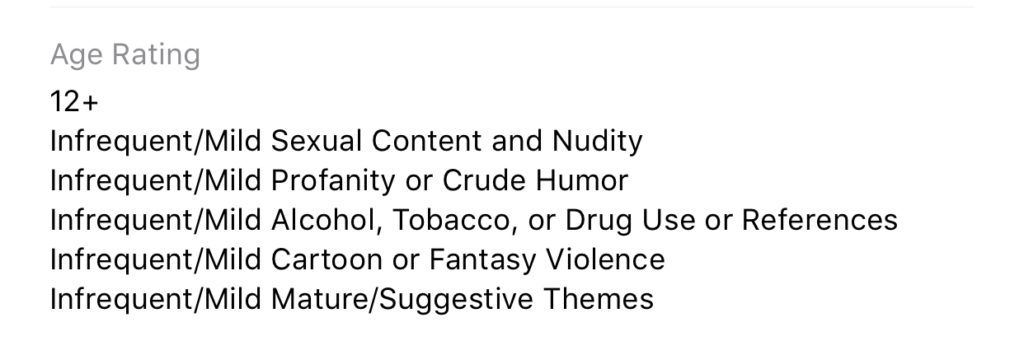

Of course you have great kids. I know they don’t want to do anything wrong online. They will not bully people, they won’t send inappropriate photos, and they are definitely not visiting adult websites. The problem with this logic is that kids don’t have to seek these things out; these things come to them. Two out of every three kids who see adult content for the first time see it by accident, and the average age at which a boy first sees pornography is 8! These are, most likely, children whose parents would consider them “good kids.”

I sat and watched a seven-year-old girl create videos of herself and post them publicly on an app called Likee. I went to look at this app in the App Store and saw that it is rated 17+ because of the ability to post your videos publicly online. I guarantee her mom didn’t know that the app was posting videos that strangers could see online. Moms and dads trust their kids because they believe they’re going to do the right thing. The issue isn’t usually what your kid does online. Most of the time the problem is the strangers on the other side of that screen.

Your kids need you to care!

The worst thing we do, as parents, is decide that we can’t learn any more about the tech our kids are using. We cannot be fooled into thinking that the digital world is moving too fast for us to keep up. It does move fast, I understand, but there are resources that we can and should use to help us better wrap our minds around our children’s time on tech. Use FamilyTechBlog.com, our YouTube channel, and our podcast to help you stay informed. Knowledge is definitely power. You need that power to keep your kids safe and help them develop healthy habits.

Secondly, we often get too focused on ourselves and what we need. While our homes shouldn’t be fully centered around our children, we have to set some boundaries and standards to protect our kids from the nonsense that the online world can provide. We should pay attention to what they do on social media and not let them use those apps until they are old enough to use them responsibly. We need to be knowledgeable about the video games they play, the sites they visit, and who they communicate with online. We should learn all we can, every chance we get, to continue to keep our kids safe.

It is easy to get discouraged. We hear about worst-case scenarios on the news almost daily: kids going missing, kids hurting themselves because of something they’ve seen online, and studies showing how damaging excessive screen time can be for our children’s brains. My advice is not to get discouraged but to get inspired. Let this information drive you to learn more to protect your kids. Learn all you can and share what you learn with all of the parents you know. That’s the best way to protect our kids and help them build healthy habits.
