There have been more than 30 instances of child abuse linked to the Tinder and Grindr apps since 2015. That number may seem small, but when you consider the fact that kids have easily skirted the age requirements of these dating/hookup apps and made contact with people who wish to harm them, any number is too high. While these companies say they’re doing all they can to keep kids from using their software, all they really say in response to these horrible occurrences is that the predators and kids violated their terms of service. Since the terms say you shouldn’t contact minors and that minors shouldn’t be using their software, they claim the responsibility isn’t theirs because the child was put in danger by using the app in a way it wasn’t intended to be used.

Officials say that isn’t good enough, and lawmakers in the UK are trying to create legislation that will require age verification on apps like Tinder and even some social media apps like Instagram. Recent suicides have been proven to be inspired by images of self-harm that were viewed on Instagram. Again, officials at the social media company say that the most violent of the images violate their terms of service. They have, however, recently banned images of self-harm and suicide and removed those categories from search results.

Here is the question: When these horrible things happen, do we blame the companies that make these online products? Is it enough to write a terms and conditions page and say that those who break the rules do so through their own fault and no fault of the company? So far, legally, that’s all it takes. It seems that the responsibility of the company ends with the terms and conditions page. If the user doesn’t follow the terms, then how is the company supposed to protect users? Some officials are asking for age verification, which means keeping more records. This is something many companies don’t want to do because of recent privacy and data breach concerns. There is only one thing I know for sure: if families get serious about monitoring their kids’ screen time and online activity, the number of these occurrences will decrease dramatically.

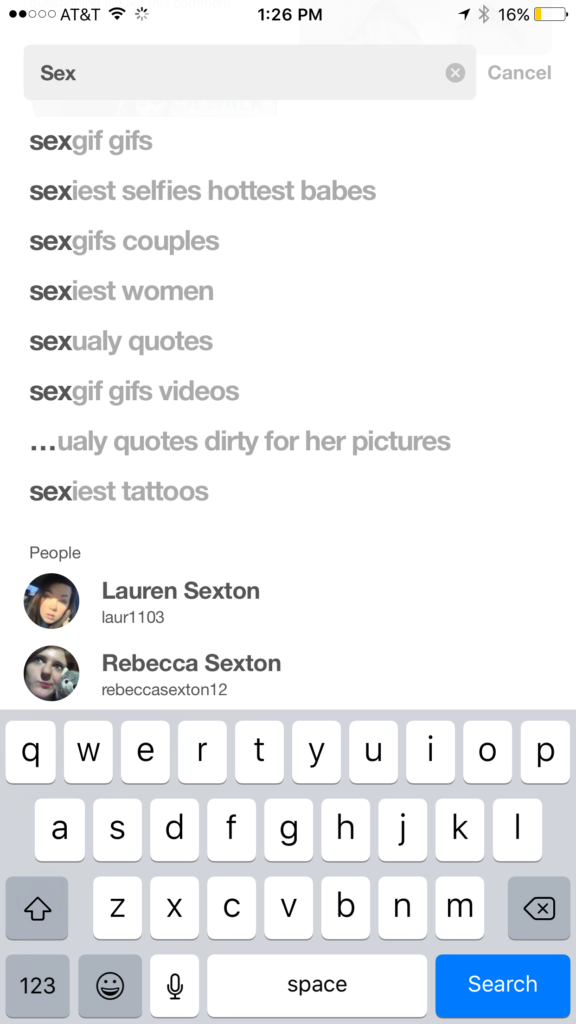

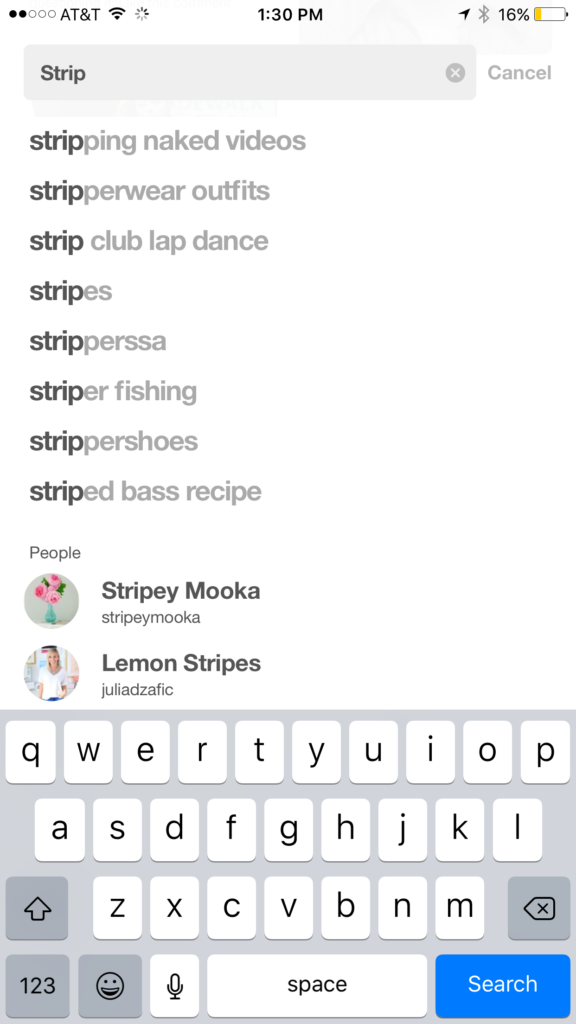

Let me describe a scenario for you. Your 12-year-old child wants to meet new people online. Maybe they heard some friends talking about a dating or hookup app, or maybe they just don’t have a lot of friends in real life. Whatever the reason, they’re looking for a way to meet people. While they’re looking through the app store, they see this in the search results:

They tap download, create a profile, and start swiping. Eventually they meet new people on the app. Conversations move to WhatsApp, Facebook Messenger, or Signal, and they schedule a meetup. Your imagination can take over from there, and if you’ve read some of the news stories, you know it can get pretty awful.

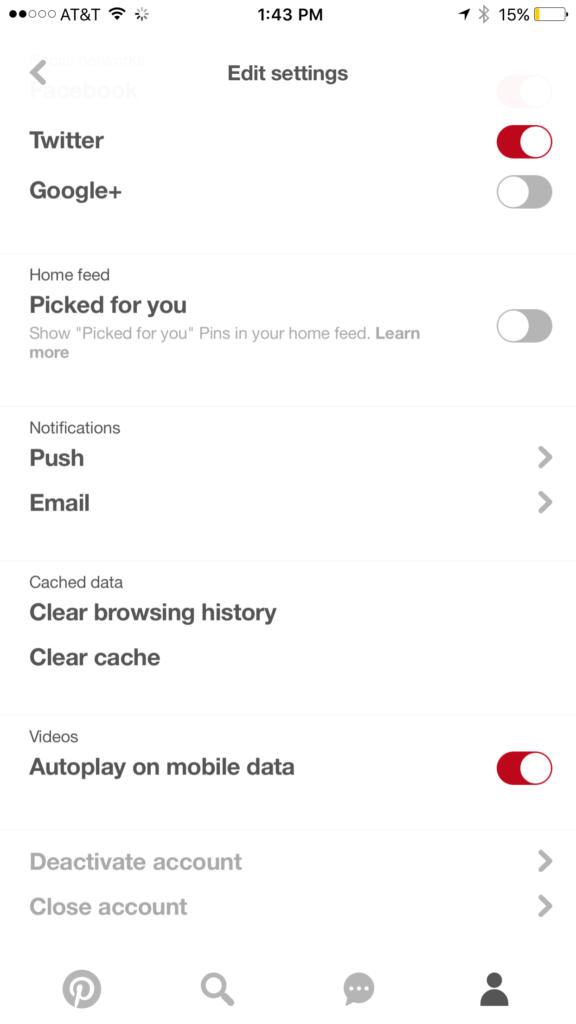

Imagine, now, that you have parental controls set so that your child has to request permission to download apps. Maybe you even have their controls set to keep them from downloading apps rated for users over 12 years of age. Either of these approaches would spare you from finding out after the fact about your child’s new friendship or, worse, romantic relationship with a stranger online. Instead, you’ll see that they’re trying to download an app designed to connect people for romantic relationships, and you’ll be able to discuss it with them. You can share the dangers of building relationships with strangers and help them understand the importance of privacy, security, and parental supervision.

There are built-in ways to protect your child on both iOS and Android devices. The key is to set them up. Use the built-in protections and features, and don’t rely on these companies to protect your children. They don’t exist to keep your family safe or even to help people build healthy relationships. These companies develop their products to make money. It is foolish to expect Instagram to protect your kids from suicide. Should they have a responsibility for what is on their app? Yes. Should you blame them if your kid harms themselves because of something they saw on the app? Not entirely. You have to take some of the blame onto yourself. There are ways to keep your kids safe from that kind of content. If you don’t know about them or don’t use them, it isn’t the fault of the company. It’s yours. Be involved, pay attention, and do the work to keep them safe.