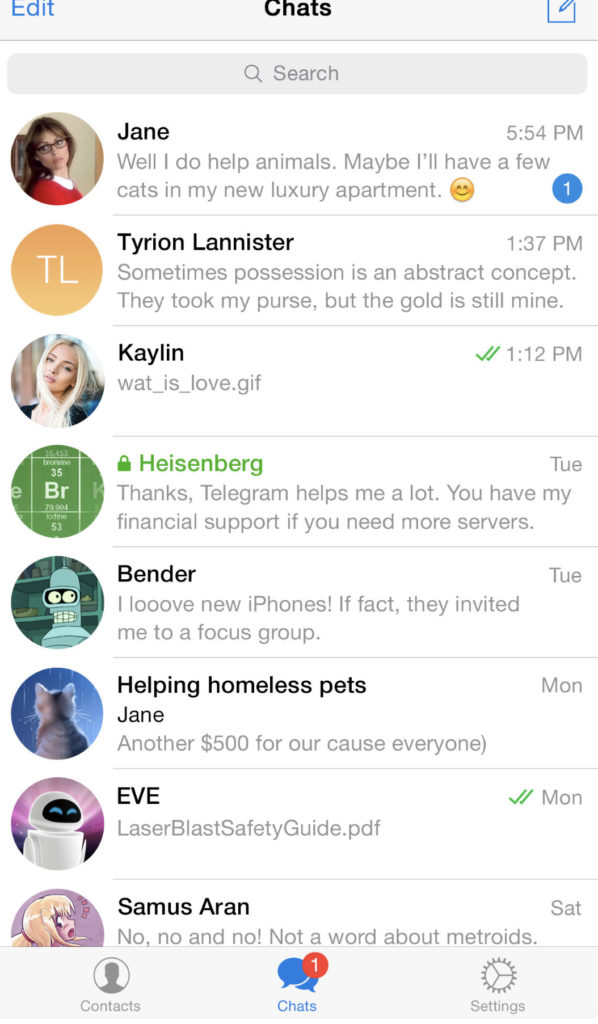

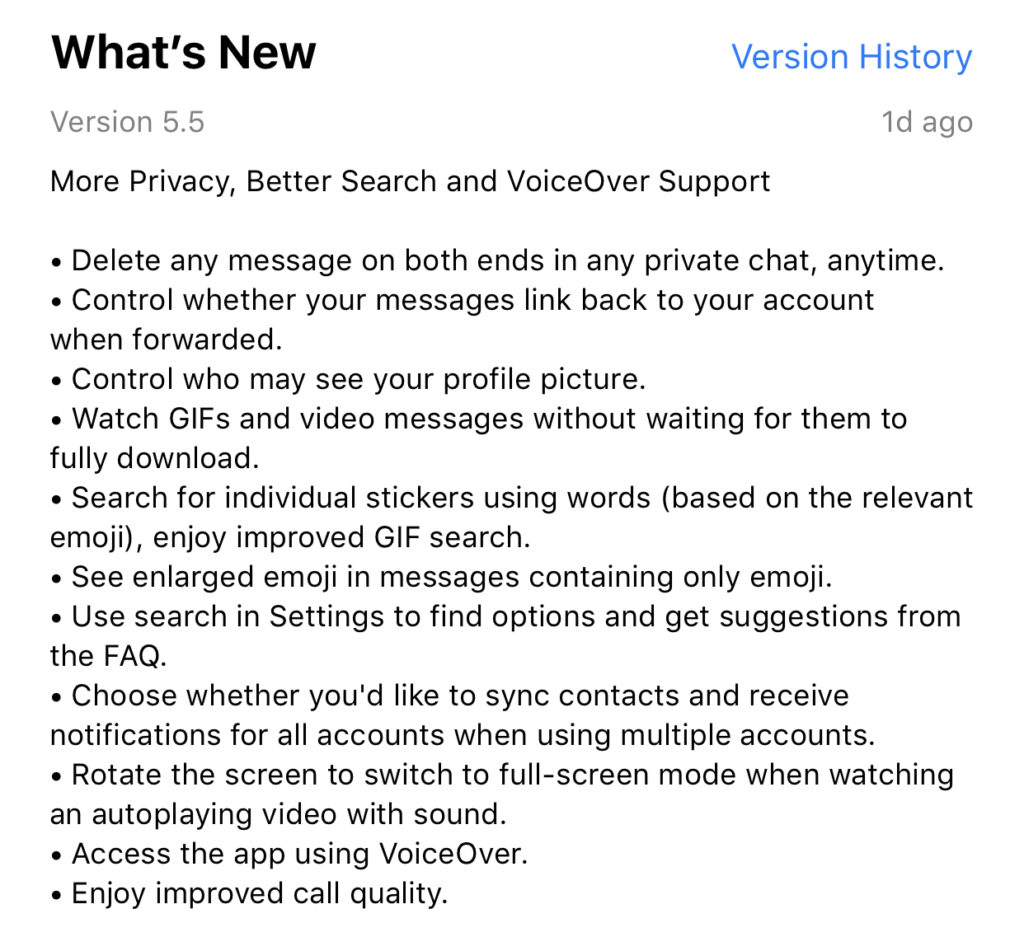

Telegram is a messenger that offers end-to-end encrypted chats and touts speed, privacy, and security. They have featured private messaging and self-destructing messages for a while, but their new feature takes privacy to a new level. You can now delete a message you’ve sent from both your account and the account you sent it to, no matter how long ago it was sent. Telegram is, again, standing up for privacy, and users are buying in. Millions have flocked to Telegram after the news of Facebook’s data leak over the past several months. It looks like Telegram is doubling down on privacy as their claim to fame. They’ve also added the ability to remove your information from a message when the message is forwarded to other users. Some accessibility and ease-of-use features have also been added.

What Parents Should Know

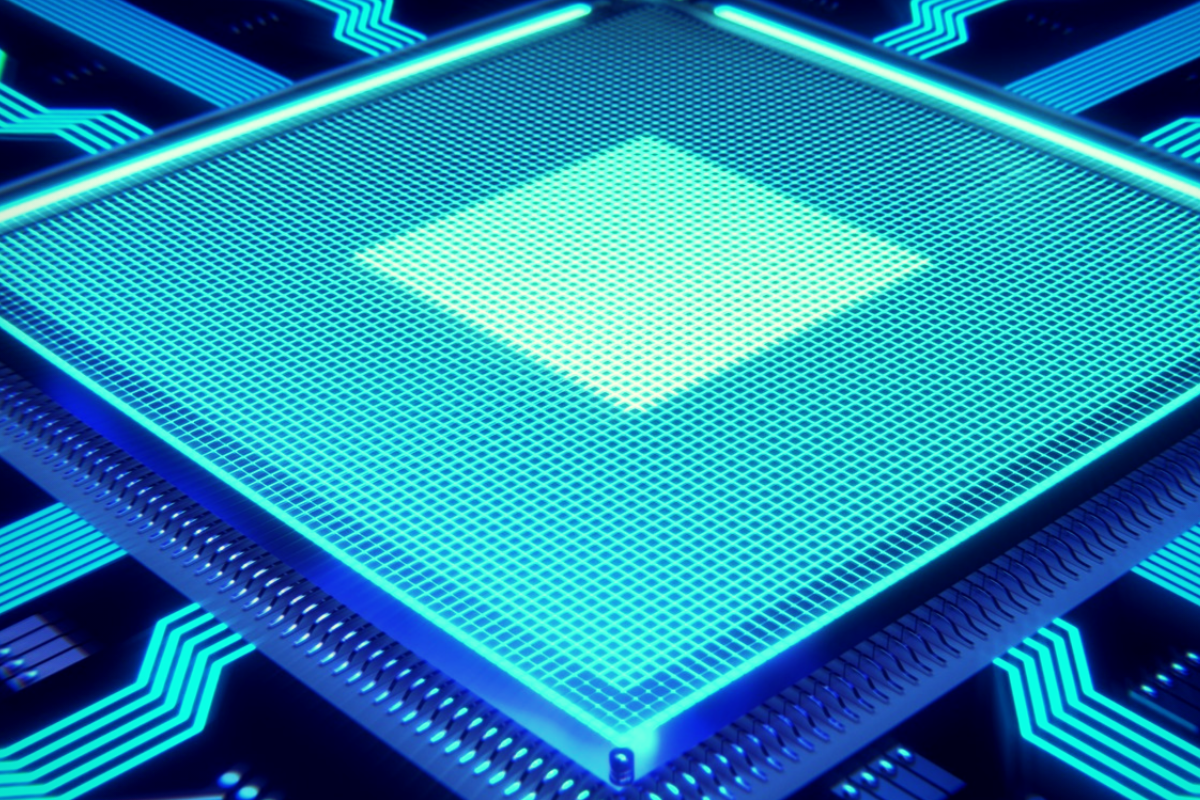

Security and privacy are often overlooked when we allow our kids to use internet-connected devices. Privacy is becoming a major concern for experts and activists in family tech safety. Messengers that allow data to be collected and used for advertising shouldn’t be used by children or even teenagers, because of the risk of that data being released or revealed without the messenger app developer’s consent. When an app features privacy as its distinguishing feature, you have to ask who the data is being kept private from. Obviously, we want data to be kept from third-party companies who would use that data to advertise. Sometimes data is even kept private from the company that developed the messenger app you are using. Telegram has a “Secret Chats” setting that must be turned on to keep your messages encrypted from end to end. (End-to-end encryption means not even the company can see or collect what is being sent.)

Anytime the ability to delete messages you’ve sent is added, I see red flags. While I think privacy is critical, there is also a risk of kids thinking they are safe from inappropriate or incriminating photos or messages being saved and used for nefarious purposes. It only takes half a second to screenshot a message or image on your screen. Most phones also let you record your screen to a video very easily. This means that you are not always anonymous online. If you are sending messages to someone, thinking you have complete privacy, you are trusting that the person you’re sending the messages to has your privacy in mind as well. Telegram is an easy way for predators, cyberbullies, and those interested in sexting to send and receive messages that do their damage and are then removed as evidence.

I have spoken to parents who have taken their kids to the police with complaints about people trying to groom them online, but the police had no evidence because the messages had all been deleted. This is why a messenger makes the FamilyTechBlog uninstall list as soon as it adds disappearing messages. It isn’t safe for your kids to chat with a feeling of anonymity, or to chat with people who can send what they want and make the message go away after it’s been viewed. Telegram is rated 17+ and I fully agree with this rating. Private messengers that allow you to chat with anyone, anywhere shouldn’t be used by children and young teenagers, especially when the messages can be removed at will.